Description

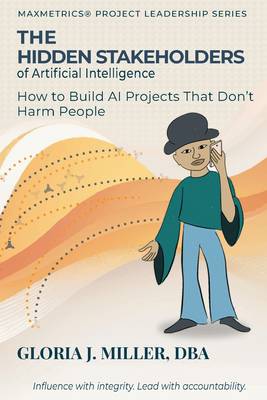

The people most harmed by AI systems are usually the ones no one thought to include.

Artificial intelligence now shapes hiring decisions, loan approvals, fraud detection, healthcare, and law enforcement. When these systems fail, responsibility is rarely clear. Projects end, teams move on, and the individuals affected may not even know that an algorithm influenced the decision.

The Hidden Stakeholders of Artificial Intelligence identifies the root cause: passive stakeholders - people who may be seriously affected by an AI system but have no influence over the project that created it. Their interests are invisible during early design decisions. Yet they are the ones who bear the consequences when systems fail.

This book provides project leaders, executives, and governance teams with a practical method for finding those stakeholders before harm occurs. At its center is the Four Tests framework - power, legitimacy, urgency, and harm - which extends classical stakeholder theory to surface the groups most projects overlook. Supporting tools include a stakeholder responsibility workshop, a harm taxonomy, impact-harm scoring, and a lifecycle-weighted governance model, all ready to apply.

Five real cases - involving a pharmacy's facial recognition system, AI training data and copyright, an automated trading algorithm, an auto loan case, and AI-generated court citations - demonstrate exactly how governance gaps emerge and what they cost.

Written by a researcher and practitioner with more than twenty years of AI project experience, this is not a book about the risks of artificial intelligence in the abstract.

It is a guide for the people building it.

Specifications

Contributors

- Author(s):

- Publisher:

Contents

- Number of pages: 232

- Language: English

Features

- EAN: 9780998348490

- Publication date: 02-04-26

- Format: Paperback

- Digital format: Trade paperback (US)

- Dimensions: 102 mm x 152 mm

- Weight: 185 g
