- Pickup within 2 hours

- Impressive selection

- Secure payment

- Always a store near you

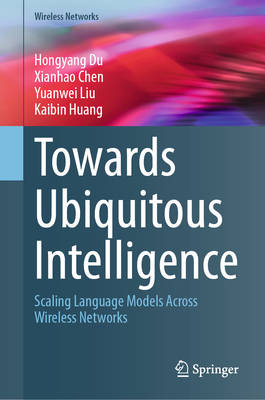

Towards Ubiquitous Intelligence

Scaling Language Models Across Wireless Networks

Hongyang Du, Xianhao Chen, Yuanwei Liu, Kaibin Huang

Description

This book systematically examines the integration of language models with wireless networks from both architectural and algorithmic perspectives. It begins with the evolution of language models and wireless network requirements, followed by core concepts such as model scaling, training, inference, and the resource constraints shaping their deployment. Building on this foundation, the book investigates LLMs in cloud environments and SLMs for edge computing, focusing on compression, distillation, and efficiency under constrained conditions. The central part of the book is structured around two complementary directions. The first, network-aided collaborative language models, explores how cloud and edge language models can jointly support distributed inference through model partitioning, collaborative training, and adaptive coordination, considering synchronization and communication constraints in wireless networks. The second, language model-aided network optimization, focuses on using language models as decision-making agents to improve network performance, covering protocol optimization, expert routing, and cross-layer integration. These technical developments are grounded through detailed application scenarios and case studies, analyzing trade-offs between accuracy, latency, and resource consumption. The book concludes with forward-looking discussions on architecture, deployment strategies, and research challenges, serving as a comprehensive reference for researchers and practitioners at the intersection of wireless networks and artificial intelligence.

Specifications

Contributors

- Author(s): Hongyang Du, Xianhao Chen, Yuanwei Liu, Kaibin Huang

- Publisher:

Contents

- Number of pages: 163

- Language: English

- Series:

Features

- EAN: 9783032201935

- Publication date: 19-05-26

- Format: Hardcover

- Digital format: Sewn

- Dimensions: 155 mm x 235 mm

Only at Librairie Club
